YWR GP: AI's Compounding Divide

The acceleration of those who reorganise around intelligence, the deepening cost of those who don't, and what Schumpeter would make of a gale which does not converge.

Banger AI thought piece #4 from Pancras Beekenkamp.

* strictly my personal views only *

On 27 February 2026, Jack Dorsey wrote to Block’s shareholders that “intelligence tool capabilities are compounding faster every week.” Five days later, Bloomberg News reported that Morgan Stanley was eliminating about 3% of its global workforce — roughly 2,500 people — targeting employees across investment banking, trading, wealth management, and asset management, following a year in which the firm had posted record net income of USD16.9bn and bumped CEO Ted Pick’s pay by 32%. Neither announcement was a distress signal. Both were declarations of acceleration by a cohort of firms that has already crossed a threshold and is now, with some deliberateness, increasing the distance between itself and those that have not.

Dorsey’s fuller formulation deserves to be read carefully: “I think most companies are late. Within the next year, I believe the majority of companies will reach the same conclusion and make similar structural changes. I’d rather get there honestly and on our own terms than be forced into it reactively.” He is not describing disruption arriving from outside. He is describing a firm that has been accelerating internally for two years and has now decided to make that acceleration structural — to remove the organisational mass that was justified by a world of slower tools, before those tools compound sufficiently that the mass becomes impossible to shift without crisis. Block cut over 4,000 roles, as Bloomberg reported, from a workforce that had tripled during the pandemic years and was generating gross profit of USD10.36bn, up 17% year on year. The announcement was not the moment of transformation. It was the moment the transformation became legible on a headcount spreadsheet.

Morgan Stanley’s insiders were, if anything, more candid. As the New York Post’s Charles Gasparino reported on 7 March 2026, sources inside the firm confirmed that the cuts were driven primarily by AI tools replacing back-office functions — “management just launched an awesome AI program with ChatGPT in the wealth management division,” one executive said, “lots of back offices getting the axe in this.” Morgan Stanley had simultaneously commissioned research estimating that AI and branch closures would eliminate 200,000 banking jobs across the European Union alone by 2030, with efficiency gains of up to 30% available to banks that fully deployed the technology. The bank had quantified the structural threat in February and enacted the first phase of it in March. This is not the behaviour of an industry surprised by a new technology. It is the behaviour of an industry whose early movers have been compounding for long enough that the structural consequences are now too visible to defer.

Joseph Schumpeter, writing in Capitalism, Socialism and Democracy in 1942, described the essential mechanism of capitalist progress as a perennial gale — not a gentle breeze of marginal improvement but a storm that continuously revolutionises the economic structure from within, destroying the old whilst creating the new. He was describing something that played out over decades: steam engines replacing artisans, assembly lines displacing craftsmen, computing absorbing clerical labour. What the opening weeks of 2026 reveal is a gale operating at a speed Schumpeter did not anticipate — and with a feature he did not fully map. The destruction and the creation are not sequential. They are simultaneous, often within the same institution, and the gap between those who reorganised around intelligence early enough and those who have not is widening not linearly but geometrically. The compounding divide is not a metaphor. It is a mechanism, and understanding it requires going back to a constraint that precedes every question of AI strategy.

This note traces that proposition through five movements. It begins with the precondition — the data architecture constraint that I have been examining throughout the Sovereign Index series — which determines which side of the divide a firm inhabits before any choice about tooling is made. It then examines the early adopter cohort from the inside: Klarna’s overshoot and what the correction revealed; Shopify’s harder version of the same lesson; and what Theo, the developer and founder behind t3.gg, demonstrated when he built a functional alternative to a USD1.3bn enterprise platform in two weeks, writing zero lines of code manually. From there the note turns to the federal government of the United States — the largest experiment in headcount reduction without architectural preparation in recorded history, and its instructive failure. It then confronts the arithmetic of what the early cohort’s compounding advantage now means, illustrated most sharply by what Microsoft’s relationship with Anthropic reveals about the pace at which even a frontier firm struggles to keep up with itself. And it closes by naming, with the precision the evidence permits, who is winning, who is losing, and what remains possible for those who have not yet moved.

The Precondition

Those who have followed this series will recognise the argument before it is stated. In IT Spaghetti in October 2025, I described what I called the biological tax: the premium paid for the human eye to act as the missing API between fragmented systems that could not communicate directly. In Facing the Innovator’s Dilemma in January 2026, I drew the harder implication: that the annual seat fee — the revenue model that has defined enterprise software and financial data for forty years — was in many firms not a charge for intelligence delivery but a charge for human cognitive mediation of an architecture that had never been designed to operate without it. The human existed because the data could not move cleanly. The seat fee existed because the fragmentation was so entrenched that biological bandwidth had become the only available interface between system and outcome.

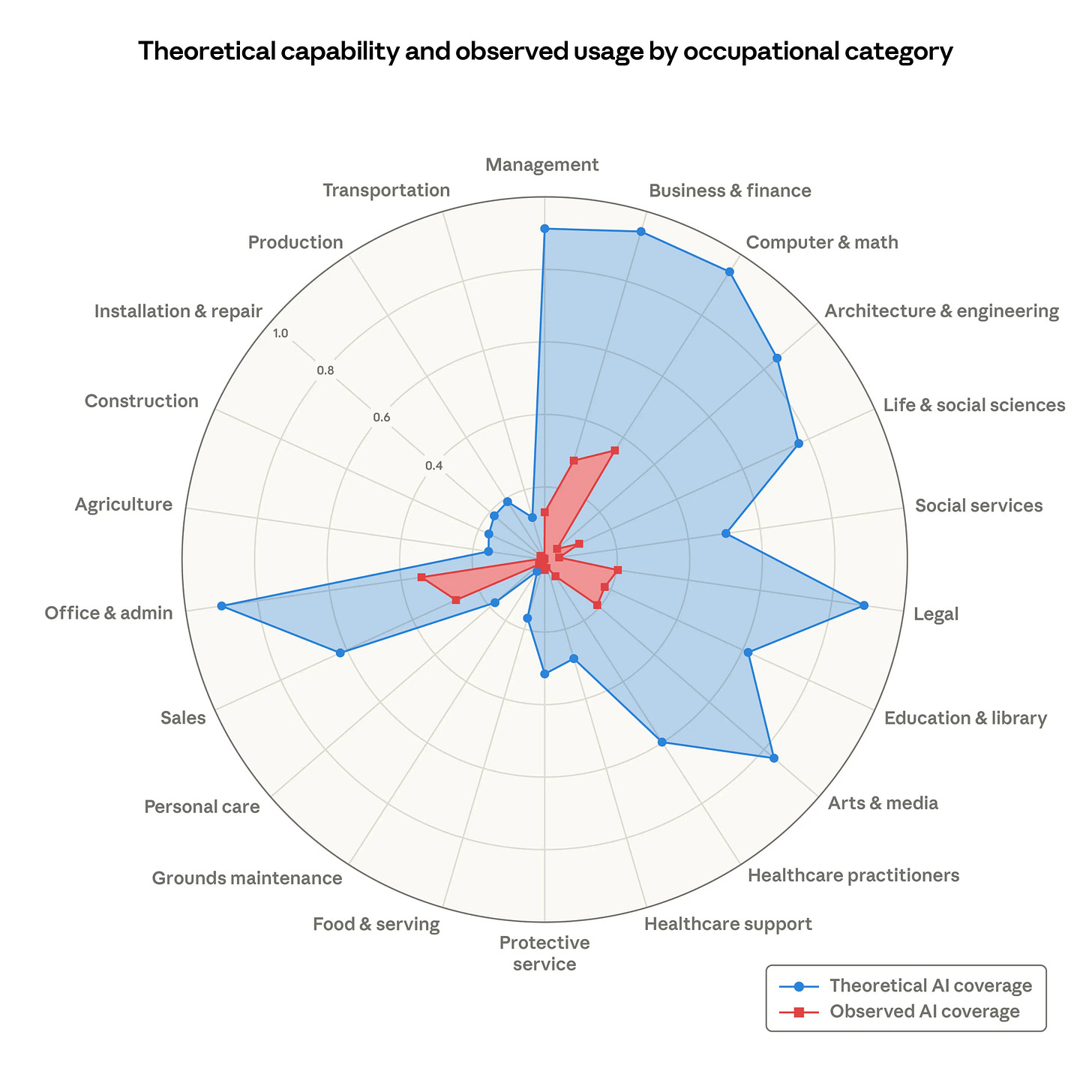

That condition turns out to be universal, and it is the primary determinant of which side of the compounding divide a firm inhabits. The firms now accelerating away share one property that has almost nothing to do with their AI strategies and almost everything to do with decisions made years before those strategies existed: their data was accessible enough, their workflows legible enough to a machine, that the agent could be deployed without first requiring a complete architectural reconstruction. The firms that cannot scale AI — and there are many of them — are confronting a condition described with unusual precision in the Anthropic Economic Index research published in March 2026. Analysing real professional usage of Claude across occupational categories, researchers Maxim Massenkoff and Peter McCrory constructed a measure of “observed exposure” — the share of tasks that AI is actually performing in professional settings — and set it against the theoretical capability of what AI could already do. The resulting picture is the clearest quantification of the adoption divide yet assembled.

For computer and mathematical occupations, AI is theoretically capable of handling 94% of tasks. Observed professional usage covers 33%. For business and finance, theoretical capability approaches 95%; observed usage sits below 40%. The gap holds across every knowledge-intensive category: legal, management, architecture, office administration. The red area of actual deployment, as the paper describes it, barely registers against the blue expanse of what is already possible. The researchers’ reading is measured: as capabilities advance, adoption spreads, and deployment deepens, the red will grow to fill the blue. Their data on the labour market consequences is more pointed. There is no systematic increase in unemployment for highly exposed workers — the gale is not yet destroying existing jobs at scale. Its primary effect is different, and in some ways more consequential: a 14% drop in job-finding rates for workers aged 22 to 25 in occupations with high AI exposure. The door is not being slammed shut on those already inside. It is being quietly closed against those trying to enter.

Why does the gap between theoretical capability and observed deployment persist at such scale? The blockers the Anthropic research identifies are precisely those I described in IT Spaghetti: fragmented data that the agent cannot access, workflows built around human mediation that have never been redesigned, operating model inertia that prevents the localised pilot from scaling to enterprise-wide impact. These are not technology failures. They are the accumulated residue of rational decisions — each one defensible in its moment, each one incrementally deepening the cost of the transition that was always coming. The firms now accelerating away are those that accepted the cost of resolving this before the urgency arrived. The firms in the gap are those that deferred it until the urgency is here — and are discovering that urgent remediation of accumulated architectural debt cannot be completed in the time available before the compounding advantage of those who moved first becomes a different category of distance entirely.

In Facing the Innovator’s Dilemma, I drew the structural analogy to Apple’s iTunes error: the decision to protect the USD0.99 download because its unit economics were excellent, right up to the moment Spotify made the unit irrelevant. The biological seat is the USD0.99 download of enterprise software. It was rational to protect it. It is now the mechanism of displacement. The firms that moved first did not do so because they were prescient. They did so because they accepted the cost of remediation before the cost of delay became visible — before the Morgan Stanley announcement, before the Block announcement, before the headline about the largest peacetime workforce reduction in American history. The cost of delay is now visible. The question is whether enough runway remains to act.

The Klarna Lesson and the Cost of Getting It Wrong

Klarna is the most analytically useful firm in the early adopter cohort precisely because it failed publicly and recovered productively, and because the failure illuminated something that pure success stories conceal: the location of the remaining spaghetti, even within a firm that moved first and moved fast.

The Swedish fintech became an early adopter of OpenAI’s enterprise tools in 2023. By 2024, as Computer Weekly reported in November 2025, it had reduced its workforce from 5,500 to below 3,000, with AI handling the work of 700 full-time customer service agents. Revenue roughly doubled relative to 2022. Then the model overshot. Service quality fell. Customers complained of generic, repetitive responses unable to handle nuanced or emotionally sensitive queries. By mid-2025, as Mind the Product reported, CEO Sebastian Siemiatkowski acknowledged the error without equivocation: “We went too far.” Rehiring began on what he described as an Uber-style model — flexible, remote human agents blended back into the AI infrastructure already in place.

The press treated this as a cautionary tale about AI overreach. It was more precisely the discovery of where the spaghetti still ran. Klarna had built clean enough architecture to automate high-volume, low-complexity customer interactions successfully. It had not yet built the interpretability layer (the governance design that identifies which tasks genuinely require human cognitive mediation and which do not) that would allow it to extend into more ambiguous territory without degrading trust. The reversal refined the model rather than abandoned it. By November 2025, AI was handling the work of 853 full-time staff, up from the 700 reported before the overshoot, while quarterly revenue had reached USD903mn, more than double the equivalent period three years prior. The correction had been absorbed, and the trajectory resumed at a steeper angle.

Klarna’s arc — overshoot, visible failure, calibration, resumed acceleration — is the pattern of a firm moving from tool adoption to architectural reorganisation through the friction of a public mistake. Shopify’s Tobi Lütke attempted to codify the same lesson before rather than after the error. In April 2025, as Fortune reported, Lütke issued an internal mandate: teams must demonstrate that AI cannot perform a task before requesting additional headcount. The agent is the default. The human must justify its presence. This is a profound inversion of traditional hiring logic, and it is only enforceable honestly if the data architecture beneath it is clean enough that the agent can actually do the work being asked of it. Where the architecture is fragmented, the mandate produces the DOGE outcome rather than the Klarna outcome. Where the architecture is sound, it produces the second half of the Klarna trajectory — resumed growth on a structurally smaller cost base.

The deeper lesson, however, comes not from the large company calibrating its AI deployment but from what a single developer with a clear product vision can now do with no manual code at all. Theo, the founder and developer behind t3.gg, documented this in a video titled Software Engineering is Dead Now. The claim that attracted the most attention: he built Lawn, a video review platform with functionality comparable to Frame.io, in two weeks, part-time, without writing a single line of code manually. Frame.io took years of development and was acquired by Adobe for USD1.3bn. The core functionality — video uploading, review workflows, commenting, approval chains — now takes two weeks and costs essentially nothing in development labour.

Theo’s analysis of what this means is pointed. Code used to be the most expensive part of the software pipeline. It is now free and nearly instant to generate. The bottleneck has moved upstream to QA, testing, review, and architectural judgment — precisely the functions requiring human taste and interpretability that no agent can yet fully substitute for. A small flat team of one to three people can now out-ship a large organisation because it lacks the approval processes, the coordination overhead, and the organisational inertia that large teams accumulate. Dorsey’s formulation — “smaller and flatter teams” — is not primarily about cost reduction. It is a structural recognition that the relationship between organisational size and output velocity has reversed: the firms carrying the largest headcounts for engineering and back-office work are now, for the first time, at a competitive disadvantage relative to much smaller, architecturally cleaner operators.

This is the micro-level expression of precisely what the Block and Morgan Stanley announcements signal at macro level. The acceleration within the early adopter cohort is not simply a matter of doing the same things with fewer people. It is a recognition that the organisational architectures built to manage the constraints of the previous era are now themselves the constraint — and that dismantling them, whilst painful, is the precondition for running at the pace the tools make possible.

The Bureaucratic Test

The most extreme expression of the adoption thesis in 2025 and early 2026 was not in fintech or financial services. It was in the federal government of the United States, where the Department of Government Efficiency (DOGE) orchestrated what the Cato Institute subsequently described as the largest peacetime workforce reduction on record. As Bloomberg reported in January 2026, the federal civilian workforce fell from 3.015 million employees at the start of 2025 to 2.744 million by November, a net decline of about 271,000, or roughly 9%, with some 280,000 roles eliminated or planned across 27 agencies. Elon Musk, who led the effort until his departure from the administration in May, framed it in terms Schumpeter would have recognised: “the chainsaw for bureaucracy.” The ambition was not incremental. It was structural, or presented itself as such.

The outcome is now documented. The Cato Institute’s end-of-year analysis concluded that DOGE had “no noticeable effect on the trajectory of spending,” with federal outlays rising nearly 6% over the same period that headcount fell 9%. Savings targets were revised repeatedly downward — from Musk’s initial pledge of USD2tn in annual savings, to USD1tn, to USD150bn — as the Hamilton Project’s real-time tracking showed spending surpassing 2024 levels in December with three weeks still remaining in the year. The agencies cut most deeply generated genuine savings that were promptly overwhelmed by increases elsewhere. Mandatory spending on Social Security, Medicare, and debt interest — the structural core of the federal budget — was entirely untouched by any of it.

The point is architectural rather than political. What DOGE encountered, at sovereign scale, is precisely the spaghetti problem that the October 2025 note described at enterprise scale: the human was not the cost. The human was the missing API — the institutional memory that knew which form connected to which database, which override applied to which edge case, which countersignature a process required before it could advance. As one General Services Administration manager told NPR in March 2025, describing a 63% headcount reduction targeted at the Public Buildings Service: “There’s just been no time. It appears that the agency is just cutting buildings and people without even analysing anything.” Remove the interpreter without first redesigning the system the interpreter was navigating, and the system does not become efficient. It becomes slower, more opaque, and increasingly dependent on tacit knowledge that left with the people who carried it.

DOGE did deploy an AI tool, as Fortune reported in March 2025, aimed at automating some government tasks. A chatbot layered over unreformed federal IT infrastructure produces a faster version of the same dysfunction rather than a resolution of it. The federal government is, in the most literal sense, the world’s deepest accumulation of legacy systems, incompatible databases, paper-dependent workflows, and procedural logic embedded in the institutional memory of career civil servants whose departures create a void that no language model, however capable, can fill without the architectural foundation to support it.

The contrast with the private sector early adopters is exact. Block, Klarna, and Shopify all built AI fluency and data architecture before the structural reduction decisions were taken. Architecture first, integration second, structural reduction third: this is the sequence that produces efficiency gains rather than spending increases. DOGE ran the sequence in reverse — structural reduction first, in the hope that efficiency would follow — and produced the largest peacetime workforce reduction on record alongside an increase in outlays. The lesson is not that governments cannot adopt AI. It is that the precondition respects no institutional ambition, and that removing the human who was mediating the spaghetti does not remove the spaghetti. It simply leaves it unmediated.

The Speed of the Frontier

Here the note requires a moment of genuine candour about the pace at which the frontier is moving, because the cases above illustrate the adoption gap from the perspective of those on the wrong side of it. What the Microsoft case illustrates is something more unsettling: the speed at which the frontier moves is now fast enough that even the firms investing at frontier scale cannot build internally at the pace the external ecosystem is generating capability.

Microsoft invested USD13bn in OpenAI. It built GitHub Copilot into a product deployed, by its own account, across 85% of Fortune 500 companies. It spent years positioning Copilot as the definitive AI productivity layer for the enterprise. Then, as The Verge reported in early 2026, Microsoft began deploying Claude Code — Anthropic’s agentic coding tool, built by a direct competitor — internally across its own engineering teams, with even non-developers encouraged to use it. The centrepiece of Microsoft’s Wave 3 platform update, Copilot Cowork, was built not on OpenAI’s models but on Anthropic’s Claude, following a USD30bn Azure compute deal announced in November 2025. The product that Microsoft is presenting as its flagship agentic offering runs on a competitor’s model, delivered through a partnership whose scale suggests this was not a provisional or marginal decision.

Ethan Mollick of Wharton, writing about the Copilot Cowork launch in GeekWire in March 2026, noted that Anthropic’s standalone Cowork product had been built in a matter of weeks using Claude Code and was evolving rapidly — then asked whether Microsoft, with what he described as “a tendency to launch a leading product and then let it sit for a while,” could match that pace. The question is its own answer. Anthropic’s product: built in weeks, updating continuously. Microsoft’s equivalent: months in development, requiring a USD30bn infrastructure partnership to deliver. The firm that put AI tools into 85% of Fortune 500 companies needed a rival’s tool to keep its own engineering organisation at the frontier.

This is Schumpeter’s gale operating inside a single balance sheet. The destroyer is partially destroyed by the thing it helped create, within its own walls, and it reaches for the competitor’s product to close the gap. The implication for every firm below Microsoft in the capability distribution is not reassuring. If a firm with USD13bn invested in the leading AI laboratory cannot build internally at the speed the external ecosystem is advancing, the question for the organisation that deployed a chatbot in 2023 and has been running pilots since is not “are we keeping up” but “how large has the gap become whilst we were deciding.”

Theo’s observation from Software Engineering is Dead Now lands here with particular force. When he built Lawn in two weeks using agentic tools, he was not doing something extraordinary. He was doing something that any developer with a clear product vision and clean enough architecture can now do. The extraordinary thing is not the capability. It is the speed at which the capability has moved from remarkable to routine, and the consequent acceleration of the gap between organisations built to operate at that speed and those still running approval processes designed for a world where code was expensive and time-consuming to produce. Frame.io took years to build and was acquired for USD1.3bn. Lawn took two weeks and zero manual code. The economics of software production have not merely improved. They have inverted. And the organisational structures built around the old economics are now, in Theo’s formulation, “bureaucratic inertia”: the very thing that prevents large organisations from out-shipping the flat team.

The Arithmetic of Acceleration

The distance between the early adopter cohort and the firms still running pilots is no longer a matter of competitive positioning in the conventional sense. NBIM — Norges Bank Investment Management, the world’s largest sovereign wealth fund at USD2tn under management — is the most data-rich illustration of where the compounding advantage now stands for an institution that moved early with genuine architectural commitment.

Nicolai Tangen has been public about the numbers in a way that few asset managers are, and his formulations deserve the same careful reading as Dorsey’s. As Bloomberg reported in May 2025, NBIM’s employees reported a 15% increase in efficiency in 2024 as a direct result of AI adoption. Tangen projected 20% in 2025 and another 20% the year following. The fund reduced its workforce from 1,079 employees at the end of 2023 to 676 by the end of 2024 — a reduction of 37%, achieved entirely through a hiring freeze rather than active redundancies: natural attrition was simply not replaced. Tasks that previously required days — monitoring news about portfolio companies across 16 languages, structuring the information into a coherent overview — now take minutes. Executive pay package documents of 40 to 50 pages are fed into the system alongside NBIM’s voting guidelines and its history of previous decisions; the output is a voting recommendation with roughly 95% accuracy. Trading cost savings from AI-assisted execution already run to hundreds of millions of kroner annually, against a target of USD400mn.
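
The efficiency figures above are easy to read as additive, so it is worth working through the arithmetic. The sketch below is a minimal illustration, assuming that each year’s self-reported gain applies on top of the previous year’s level (an assumption of mine, not something Tangen or Bloomberg states) and that “the year following” means 2026. The variable names are hypothetical; the numbers are those quoted in the text.

```python
# Self-reported efficiency gains quoted in the text.
# 2024 is reported; 2025 and 2026 are Tangen's projections.
efficiency_gains = {2024: 0.15, 2025: 0.20, 2026: 0.20}

# Assumption: gains compound, i.e. each year's percentage applies
# to the level reached at the end of the previous year.
cumulative = 1.0
for year, gain in efficiency_gains.items():
    cumulative *= 1.0 + gain
    print(f"{year}: cumulative efficiency multiple {cumulative:.3f}")

# Headcount figures quoted in the text (end of 2023 and end of 2024).
headcount_start, headcount_end = 1079, 676
reduction = (headcount_start - headcount_end) / headcount_start
print(f"Headcount reduction: {reduction:.1%}")
```

On that assumption, three years of 15%, 20%, and 20% come to a cumulative multiple of roughly 1.66 rather than the 1.55 that simple addition would suggest, and the headcount figures reproduce the 37% reduction cited above.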

Claude, built by Anthropic, is the primary tool — used by 100% of NBIM’s employees, integrated across all devices in the organisation. Tangen’s mandate is unambiguous. As Bloomberg reported from his interview: “It isn’t voluntary to use AI or not. If you don’t use it, you will never be promoted. You won’t get a job.” When asked about the competitive implications, he told Bloomberg: “If we compete with companies that are not using AI, we’re 50% ahead. It’s unbelievable. They will never catch up.” At Arendalsuka in August 2025, as Top1000funds.com reported, he described the urgency still more directly: “There are too many companies where it’s not happening enough, and if you’re not involved now, you’re falling behind and you’ll never get back on the offensive.”

The compounding mechanism beneath NBIM’s numbers deserves to be stated precisely, because it is the feature that distinguishes this technological transition from the electricity diffusion that economists typically cite as the reassuring historical analogue. When electricity spread through American manufacturing in the early twentieth century, the productivity gap between early and late adopters eventually converged: the technology became infrastructure, available at similar cost and capability to all, and the advantage of early adoption dissipated as the technology became universal. Most commentary on AI adoption implicitly assumes this pattern will repeat.

The AI adoption gap has a structural feature that electricity diffusion did not possess. The advantage does not simply accrue to those who adopt the technology. It compounds within them. An institution that has been running AI against clean institutional data for two years or more, as Block has and as NBIM has (its portfolio managers have been training the Investment Simulator against their own trading patterns since 2023), generates outputs that feed back into the next cycle of prompting. Richer context produces more differentiated synthesis. More differentiated synthesis, accumulated across hundreds of thousands of interactions, becomes the proprietary reasoning capacity that Satya Nadella described at Davos in January 2026: the ability to embed the tacit knowledge of the institution into model weights the organisation actually controls. Every cycle, the leaders’ reasoning engines grow more differentiated from a generic model. The laggards, working with generic models against fragmented data, produce generic outputs. The gap widens geometrically, because the inputs to the next cycle are a function of the quality of the previous one.
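
The contrast between the electricity pattern and the compounding pattern can be made concrete with a toy model. What follows is a sketch under stated assumptions, not an estimate: the functions `converging_path` and `compounding_path`, the common ceiling, the catch-up rate, the 20% growth rate, and the three-year delay are all hypothetical choices of mine for illustration. In the first model every adopter closes a fixed share of its distance to a shared ceiling each year; in the second, each year’s level is a multiple of the previous year’s, which is one simple way to express inputs that depend on the quality of the prior cycle.

```python
def converging_path(years, adopt_year, ceiling=2.0, catch_up=0.5):
    """Shared-infrastructure model: after adopting, a firm closes a fixed
    fraction of its distance to a common ceiling every year."""
    level, path = 1.0, []
    for year in range(years):
        if year >= adopt_year:
            level += catch_up * (ceiling - level)
        path.append(level)
    return path


def compounding_path(years, adopt_year, growth=0.20):
    """Compounding model: after adopting, each year's level is the
    previous year's level multiplied by (1 + growth)."""
    level, path = 1.0, []
    for year in range(years):
        if year >= adopt_year:
            level *= 1.0 + growth
        path.append(level)
    return path


def gaps(leader, laggard):
    """Year-by-year absolute gap between leader and laggard."""
    return [a - b for a, b in zip(leader, laggard)]


YEARS, DELAY = 10, 3  # hypothetical horizon and laggard's adoption delay

converging_gap = gaps(converging_path(YEARS, 0), converging_path(YEARS, DELAY))
compounding_gap = gaps(compounding_path(YEARS, 0), compounding_path(YEARS, DELAY))

for year in range(YEARS):
    print(f"year {year}: converging gap {converging_gap[year]:.3f}, "
          f"compounding gap {compounding_gap[year]:.3f}")
```

In this toy setup the converging gap halves every year once the laggard adopts and is close to zero by the end of the horizon, while the compounding gap grows by 20% a year. Note the limit of the sketch: with identical growth rates the ratio between leader and laggard stays fixed, and it is the absolute distance that widens geometrically. A leader whose growth rate itself rose with accumulated context would pull away faster still.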

The 213,000 hours a year that Tangen counts as saved is not a ceiling. It is the return on 2024’s investment, calculated before 2025’s agentic tools existed and before 2026’s more capable models were deployed against the same architecture. The firms that built institutional infrastructure in 2022 and 2023 are now compounding against a capability base that did not exist when they started. The firms beginning their architectural remediation now are starting a compounding process that the leaders began three years ago, against a more capable baseline, with none of the accumulated context. Dorsey’s formulation, “intelligence tool capabilities are compounding faster every week,” is not rhetorical. It is a precise description of the mechanism that has been running inside Block, inside NBIM, inside the early cohort, quietly and without announcement, for two to three years. The Block announcement was not the moment of adoption. It was the moment when the compounding had become large enough that the previous organisational structure could no longer be economically justified. The announcement follows the demonstration. It does not precede it.

What Schumpeter Got Right, and What He Did Not

Schumpeter was right about the gale, and right that it would be perennial. He was right that the agent of destruction is also the agent of creation, and that the discomfort of the transition is inseparable from the eventual advance. What he did not fully map is that this particular gale carries a property that no prior wave of creative destruction possessed: it learns. And because it learns, the gap it creates does not close in the way that previous technological transitions eventually closed.

The Microsoft case is the sharpest illustration. Here is a firm that invested USD13bn to be at the frontier of the intelligence transition. It built Copilot into the productivity layer of the enterprise world. It is, by any measure, among the best-positioned organisations on earth to benefit from this wave. And yet: its flagship agentic product runs on a competitor’s model; its internal engineering teams have adopted a competitor’s coding tool; and Ethan Mollick publicly questioned in March 2026 whether Microsoft’s development cadence could match the pace at which Anthropic was updating the very product Microsoft had built its Wave 3 strategy around. This is not a failure of Microsoft. It is a demonstration of how fast the exponential is moving — fast enough that even a firm at the frontier, with essentially unlimited capital and the world’s most widely deployed enterprise AI product, encounters gaps between its own tooling and the external ecosystem’s pace of advance.

What Schumpeter also did not anticipate is that the destruction and the creation can occur simultaneously within a single institution — that the same firm can be both the agent of disruption in one domain and the subject of it in another, without this constituting a paradox. Block disrupts payment processing with AI whilst simultaneously disrupting its own engineering and operations organisation with the same tools. Microsoft disrupts enterprise productivity with Copilot whilst its own engineering organisation reaches for Claude Code to manage the technical debt that vibe coding has produced internally. These are the logical consequences of a gale that does not respect organisational boundaries — that accelerates through institutions as readily as it accelerates across them.

The electricity analogy fails precisely here. Electricity did not learn. A factory that installed electric motors in 1910 did not accumulate a differentiated electricity advantage relative to one that installed them in 1920. Both consumed an identical input from the grid. The AI-sovereign institution of 2024 is not consuming an identical input from a grid. It is consuming an input that improves with use, whose improvement feeds back into the institutional intelligence that makes the next cycle more valuable, and whose accumulated context is proprietary in a way that electricity never was. NBIM’s reasoning engine is not the same as a generic Claude deployment. It has been trained, through hundreds of thousands of interactions, against NBIM’s specific data, guidelines, voting history, and institutional preferences. That differentiation is not available for purchase. It has to be compounded.

Tangen’s “they will never catch up” is, in this light, not a boast. It is a description of the mechanism, stated by someone who has been watching it operate from the inside for three years. The firms that are 50% ahead are not 50% ahead because they have better AI tools. They are 50% ahead because their tools have been compounding against their institutional data for long enough that the gap has become structural rather than technical — a difference in kind rather than degree. Schumpeter’s gale, in his original formulation, eventually settled into a new equilibrium that all participants could inhabit. The gale of 2026 does not settle. It accelerates. And the second derivative — the rate of acceleration of the gale itself — is the most important number in enterprise strategy, because it determines how much time remains before the gap becomes the kind of distance that capital and ambition alone cannot close.

The Map of What Comes Next

The Anthropic Economic Index paper makes an observation that has received less attention than the capability gap numbers, but which may be the most important finding for anyone thinking about the next decade. There is no systematic increase in unemployment in highly exposed occupations. The gale is not yet eliminating jobs at scale. Its primary effect is the one described earlier: a 14% drop in job-finding rates for workers aged 22 to 25 in highly exposed roles. The door is not being closed on those already inside. It is being quietly shut against those trying to enter. The most exposed workers are more likely to be older, more educated, higher-paid, and 16 percentage points more likely to be female — a demographic profile that does not match the popular image of AI displacement, and which has significant implications for how the divide compounds into the labour market over the coming decade. NBIM’s workforce fell 37% not through redundancies but through a hiring freeze maintained whilst productivity rose. The firms on the right side of the divide are not eliminating their existing workforce at scale. They are allowing it to contract whilst their output expands — and closing the door to new entrants who would previously have joined to absorb the growth.

The winners of this transition are identifiable. They are the firms that own the System of Record, the authoritative source of enterprise truth that agents must read from and write to regardless of how the interface layer evolves. As I argued in The Mispriced Moat in February 2026, the February software selloff fundamentally misread the agentic era: agents do not make the System of Record obsolete, they make it indispensable. Salesforce, SAP and Oracle’s data layer, the platforms at the authoritative centre of enterprise information, are strengthened by the transition precisely because every agent requires a foundation of truth to function. The data moat deepens as the interface layer thins. The firms that have built the orchestration layer, connecting disparate systems of record into a coherent intelligence infrastructure, occupy a premium position with no obvious challenger at scale. The supply chain upstream of the hardware cycle wins too: TSMC earns its revenue at the moment of manufacture and carries none of the obsolescence risk; ASML remains the indispensable bottleneck in the lithography progression. The regulated vertical AI platforms trained on 20 years of proprietary domain data that generalist models cannot access sit behind a moat that is fundamentally different from the moat of general-purpose AI: they are not competing on model capability but on institutional trust, regulatory compliance, and the depth of domain-specific context that took years to accumulate.

The losers are equally visible. The UI-only point solutions — the project management dashboards, the invoicing tools, the workflow automation platforms that existed as an interface layer over undifferentiated data — are genuinely at risk in precisely the way the February selloff intuited, even if the indiscriminate application to all software was analytically poor. Agents do not need interfaces: they read from and write to the data layer directly, and as Theo demonstrated, a single developer can now build a functional replacement for a USD1.3bn enterprise platform in two weeks at negligible cost. The hardest category — and the most consequential for the sheer scale of what is at stake — is the large enterprise with genuine institutional data: valuable, proprietary, embedded in workflows that actually matter, but legacy architecture that prevents that data from becoming machine-legible at scale. These are the firms for which the window is most urgent and the path most demanding. Their data moat is real. Their ability to deploy it is constrained by decisions not taken over the preceding decade.

The firms that will make this transition successfully are those whose leadership accepts, with the honesty that Dorsey displayed and that Tangen has maintained for three years, that the previous organisational model was designed around a constraint that is dissolving — and that the dissolution is compounding faster every week. The firms that will not are those still treating AI adoption as a technology project rather than an institutional reorientation; those running pilots as a way of appearing to engage with the transition without making the architectural commitments that would allow the transition to actually occur.

The Microsoft case offers the final clarifying observation. Here is the firm with the largest AI investment in corporate history, the deepest enterprise distribution, and the most sophisticated AI product suite in the market. It looked at the gap between where its own tooling had arrived and where the frontier had moved, and it responded not by defending the position but by paying whatever was required to close it. The USD30bn Azure deal with Anthropic is not primarily a compute transaction. It is a statement about pace: that the frontier is moving fast enough that even Microsoft cannot afford to insist on building everything internally. The willingness to see the gap honestly and accept the cost of closing it — rather than defending the position that created it — is the disposition that separates the firms accelerating away from those watching from a pilot programme.

Tangen’s description of his own approach is worth sitting with. He told the Feedforward podcast that he has been running around “like a maniac” since 2022 trying to convince his staff to adopt AI — making it mandatory for career advancement, embedding it in performance reviews, running hackathons, deploying AI ambassadors across every office. He achieved 100% usage and 50% advanced usage. The efficiency gain was 15% in year one. The projection is 20% in years two and three. The trajectory does not flatten. It accelerates as the tools improve against the same architecture. Not a strategy document. Not a pilot programme. Not a chief AI officer appointed to produce a roadmap. A chief executive who accepted, three years ago, that the tools would compound and that the only way to be on the right side of the compounding was to start the clock running — and to make the architectural commitment that allows the compounding to actually compound.
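The arithmetic behind those figures can be sketched in a few lines. This is a rough illustration rather than NBIM’s own calculation: it assumes the 15% and 20% gains compound multiplicatively on the prior year, that the 20% projection applies to each of years two and three, and that a firm which never starts stays at a flat index of 100. The function name and the index itself are hypothetical.

```python
# Illustrative arithmetic only. The compounding assumption and the flat
# baseline of 100 are assumptions of this sketch, not figures from NBIM.
YEARLY_GAINS = [0.15, 0.20, 0.20]  # year one as reported; years two and three as projected


def cumulative_index(gains, base=100.0):
    """Return the efficiency index after each year, compounding multiplicatively."""
    index = base
    path = []
    for gain in gains:
        index *= 1.0 + gain
        path.append(round(index, 1))
    return path


path = cumulative_index(YEARLY_GAINS)
for year, level in enumerate(path, start=1):
    # The gap is measured against a hypothetical firm that stays at 100.
    print(f"year {year}: index {level}, gap to flat baseline {level - 100:.1f}")
```

Under these assumptions the index ends year three at roughly 165.6, and the yearly increments widen (15, then 23, then 27.6 points) even though the percentage gain is unchanged between years two and three. That is the sense in which the trajectory does not flatten.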

That moment has now arrived for the broader market. The Block and Morgan Stanley announcements are not the beginning of the AI disruption of white-collar work. They are the first quarterly reports from an adoption cycle that started in 2022 and has been compounding quietly ever since. The firms that started the clock in 2022 are now, in Tangen’s formulation, 50% ahead of those that did not. The firms that start now will compound against a more capable baseline, which is both an advantage and a constraint — the gap to close is proportionally larger. The firms that do not start are not standing still. They are falling further behind at an accelerating rate, because the clock on the other side does not stop.

Schumpeter’s gale is not waiting for the ground to be prepared. It is compounding faster every week. The only honest response to that observation is to ask, with more urgency than most institutional cultures permit, whether the architectural preparation has begun and, if it has not, what precisely is being waited for. The investigation, as ever, continues.

Pancras

— all views expressed are my own —

SOURCES

Financial press & commentary

· Jack Dorsey, shareholder letter and analyst call, Block Inc., Bloomberg, February 27, 2026

· Hannah Levitt, “Morgan Stanley Plans Job Cuts Affecting About 2,500 Employees,” Bloomberg News, March 4, 2026

· Charles Gasparino, “Wall Street executives say Morgan Stanley’s latest layoffs caused by AI,” New York Post, March 7, 2026

· Morgan Stanley study forecasting 200,000 EU banking job losses by 2030, PYMNTS, March 5, 2026

· Klarna AI workforce reduction and revenue doubling, Computer Weekly, November 2025

· Klarna CEO admits aggressive AI cuts went too far, rehiring announced, Mind the Product, September 2025

· Shopify CEO AI mandate: proof required before new headcount, Fortune, April 2025

· Microsoft Copilot Cowork built on Anthropic’s Claude, VentureBeat, March 9, 2026

· Microsoft deploys Claude Code across internal engineering teams, The Verge, 2026

· Ethan Mollick commentary on Copilot Cowork vs Anthropic Cowork development pace, GeekWire, March 9, 2026

· Microsoft USD30bn Azure compute deal with Anthropic, November 2025

· DOGE federal workforce reduction: 280,000 roles, Bloomberg, January 2026

· DOGE spending analysis: no noticeable effect on spending trajectory, Cato Institute / Yahoo Finance, December 2025

· GSA manager on DOGE cuts, NPR, March 2025

· DOGE AI tool deployment, Fortune, March 2025

· Satya Nadella, CEO of Microsoft, fireside discussion with Larry Fink, Chairman and CEO of BlackRock, World Economic Forum, Davos, January 2026

· Nicolai Tangen, Bloomberg interview on NBIM AI mandate and competitive advantage, May 2025

· Nicolai Tangen at Arendalsuka on AI urgency, Top1000funds.com, August 2025

· NBIM workforce reduction from 1,079 to 676 employees, Chief Investment Officer magazine, June 2025

· NBIM hiring freeze announced following AI productivity gains, Fortune, May 2025

· NBIM 15% efficiency gain, 20% projection, USD400mn trading cost savings target, Bloomberg Norway Wealth Fund mandate reporting, May 2025

· Nicolai Tangen, Feedforward Member Podcast, “AI Madness: Nicolai Tangen’s Journey to 100% AI Adoption,” 2025

· Theo (t3.gg), Software Engineering is Dead Now, YouTube, 2026

Industry & policy reports

· Maxim Massenkoff and Peter McCrory, “Labour Market Impacts of AI: A New Measure and Early Evidence,” Anthropic Economic Index, March 2026

· McKinsey Global Institute, The State of AI in 2025: Agents, Innovation, and Transformation, November 2025

· San Francisco Federal Reserve, “The AI Moment: Possibilities, Productivity, and Policy,” February 2026

Academic research

· Joseph Schumpeter, Capitalism, Socialism and Democracy, Harper & Row, 1942

Sovereign Index series

· “IT Spaghetti,” Pancras Beekenkamp, October 16, 2025

· “Capital in the Age of Enterprise AI,” Pancras Beekenkamp, October 9, 2025

· “Facing the Innovator’s Dilemma,” Pancras Beekenkamp, January 20, 2026

· “The Mispriced Moat,” Pancras Beekenkamp, February 20, 2026
